Finally, after extraction, the download script will apply the patches required to build under Native Client:

    patch -p1 < nacl_quake.patch

Building
--------

Do NOT run ./configure -- a Native Client Makefile is already provided. If the configuration script is run, it might overwrite the provided Makefile.

Comments

commented Nov 20, 2017

I created (unofficial) PKGBUILD files for Arch Linux, which can be downloaded here: If you would like to include these files in the main repository or in the Arch User Repository, I am happy to submit a pull request.

commented Nov 21, 2017

Thanks for that, @stes! I had a quick look, and I think that getting this into the AUR might require much more work. I guess it's not a good idea to package binaries built somewhere else, so I think the best course of action would be to rebuild for Arch on your side, including TensorFlow. Which main repo are you referring to, Mozilla's or Arch Linux's?

commented Nov 21, 2017

@stes I see there's ArchLinux on [Dockerhub](https://hub.docker.com/r/base/archlinux/), so you can have that as a base image on TaskCluster. So maybe you could do a PR that includes your work to build and produce an ArchLinux package?

commented Nov 21, 2017

Hello @lissyx , thanks for responding that fast!

Actually, the packages I provide in the repository are built by me for Arch Linux (using your 'official' build instructions) to make sure they are compiled against the most recent versions of the libraries.

I looked at the Dockerhub images; that seems to be the right way of building the binaries in the future. I suggest waiting until the pre-trained models are up and I am happy with the packaging process on my own machine.

commented Nov 21, 2017

@stes Perfect. I don't know ArchLinux very well, and reading the Makefile and PKGBUILD I could not find where you perform the actual build :). If you do a PR against our TaskCluster changes to produce packages, you'll need to flag me or @reuben as a reviewer first; PRs from non-collaborators cannot trigger the TaskCluster process (for security reasons), so we need to take a first look and trigger it for you (for now). We can help you with that part, though.

commented Dec 14, 2017

@stes Would you like to make a PR that at least links to where people can find your packages? We could add that in the README, like we did for Rust and Go bindings. |

commented Dec 14, 2017

@lissyx yes of course, added #1109 I put it just under the Rust and Go bindings, although it is technically not about bindings, but I wanted to prevent clutter in the README. I can update the PKGBUILD in the next days to use the most recent version of the deep speech model and also include a download procedure for pre-trained models. I wanted to let the release settle a bit before packaging anything. Once that works, I can look into the TaskCluster build (for that I will probably approach you again). |

commented Dec 14, 2017

Thanks! We should be doing a dot release soon, I hope :) |

commented Feb 25, 2018 • edited

I am currently trying to fix the deepspeech PKGBUILD on AUR for the latest version. The problem is that the README tagged with 0.1.1 is outdated, and the updated one in master is too new for version 0.1.1. So I had to guess some build options, but it still fails. Can anyone please help me build 0.1.1 properly? The previous version compiled fine (but with some security problems in the binary itself).

commented Feb 25, 2018

@NicoHood Please stick to v0.1.1, and document exactly your issues. From what I'm reading, you are mixing v0.1.1 with master TensorFlow ? Please use r1.4 branch from mozilla/tensorflow with DeepSpeech v0.1.1 |

commented Feb 25, 2018 • edited

I am happy to use a fixed tensorflow version/branch. The problem was that I did not know this branch matches 0.1.1. Where can I find this information for future builds? This branch fails at the version check:

commented Feb 25, 2018

@NicoHood This is an upstream TensorFlow issue; you should try lower versions of Bazel. We stuck to 0.5.4 for some time, and this was working with this specific branch. I explicitly remember that some people were running into issues back in the day with Bazel ~0.7.

commented Feb 25, 2018

@NicoHood I know it might be inconvenient, this is also why I've opened #1253 and related upstream issues to see how we can improve stuff. In the specific case of Bazel versions, you should refer to TensorFlow instructions as we link them in our README: https://github.com/mozilla/DeepSpeech/blob/master/native_client/README.md#building |

commented Feb 25, 2018

Hm, this gets too complicated for what it's worth then. What about the master branch of deepspeech: with which version of tensorflow can I compile it? 1.5? Also, we have tensorflow 1.5 in our official repositories; can I somehow reuse those compiled .so files? Compiling everything takes extremely long. What about the changes you made in your mozilla branch? Can you push them upstream in a generic form so that no special fork is required?

commented Feb 25, 2018

@NicoHood Yes, current master is bound with r1.5. You need to rebuild, because we switched to monolithic builds. Our changes are easy to find: it's mostly about RPi3 cross-compilation and tfcompile. If you use upstream TensorFlow, it's going to choke on some definitions. Pushing this to upstream is not that trivial .. |

commented Feb 25, 2018

@NicoHood Besides, I don't see what is complicated: just use a local install of Bazel v0.5.4 and that should work, playing with bazel's --output_user_root and --output_base.
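A minimal sketch of what such a side-by-side install could look like, assuming a Bazel 0.5.4 binary has been unpacked somewhere under the home directory. The paths and the `bazel054` wrapper name are hypothetical. `--output_user_root` is a Bazel startup option, so it goes before the command name; `--output_base` can be passed in the same position to pin the output directory of a single workspace.

```shell
# Hypothetical locations; adjust to wherever Bazel 0.5.4 was unpacked.
BAZEL_BIN="$HOME/opt/bazel-0.5.4/bin/bazel"
BAZEL_ROOT="$HOME/.cache/bazel-0.5.4"

# Wrapper that always runs the pinned binary with its own output root.
# Startup options must come before the command ("version", "build", ...).
bazel054() {
    "$BAZEL_BIN" --output_user_root="$BAZEL_ROOT" "$@"
}

# Confirm which Bazel answers before starting a long build.
if [ -x "$BAZEL_BIN" ]; then
    bazel054 version
else
    echo "Bazel 0.5.4 not found at $BAZEL_BIN" >&2
fi
```

With the wrapper defined, `bazel054 build ...` runs the pinned version without touching a system-wide Bazel or its cache.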

commented Feb 25, 2018 • edited

@NicoHood If you want to stick to upstream, you can just patch the native_client/BUILD file to remove the definitions of deepspeech_model_core, tfcompile.config, tfcompile.model and libdeepspeech_model.so. Building only our stuff from scratch (CPU build) completes in about 600-800 secs on my desktop (i7-4790K).

commented Feb 25, 2018

How would I start the build then? I installed tensorflow and modified BUILD like this (not sure if that was correct): How would I start the build and link to the system tensorflow install?

commented Feb 25, 2018

@NicoHood This is documented in the native_client/README.md, you need to symlink from TensorFlow's source to native_client/: https://github.com/mozilla/DeepSpeech/blob/master/native_client/README.md#preparation |

commented Feb 25, 2018

@NicoHood Please note you still need to build using --config=monolithic --copt=-fvisibility=hidden for libdeepspeech.so. |
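Putting the last two comments together, a hedged sketch of the build step, run from the root of the TensorFlow checkout. The `../DeepSpeech` location and the `//native_client:libdeepspeech.so` target label are assumptions, not taken from this thread; check `native_client/BUILD` and the linked README for the actual layout and target names. Only the two flags named above are shown.

```shell
# Assumed layout: DeepSpeech and tensorflow checked out side by side.
DEEPSPEECH_DIR="../DeepSpeech"
TARGET="//native_client:libdeepspeech.so"   # assumed target label
BUILD_FLAGS="--config=monolithic --copt=-fvisibility=hidden"

# Symlink native_client into the TensorFlow tree if it is not there yet.
if [ ! -e native_client ] && [ -d "$DEEPSPEECH_DIR/native_client" ]; then
    ln -s "$DEEPSPEECH_DIR/native_client" ./
fi

if [ -e native_client ] && command -v bazel >/dev/null 2>&1; then
    # BUILD_FLAGS is left unquoted on purpose so it splits into two flags.
    bazel build $BUILD_FLAGS "$TARGET"
else
    echo "Not building: native_client/ or bazel is missing. Would run:"
    echo "bazel build $BUILD_FLAGS $TARGET"
fi
```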

commented Feb 25, 2018

But I can only do this while I am also building tensorflow. So I need to package tensorflow at the same time as deepspeech. I thought this could be reused with an already installed tensorflow package, but it does not seem so. Not sure how this can be sped up then.

commented Feb 25, 2018

The README does not mention those options. It also does not state the Bazel version or the tensorflow version. https://github.com/mozilla/DeepSpeech/tree/master/native_client#building

commented Feb 25, 2018

@NicoHood No, you don't need to package tensorflow at the same time. It's all statically compiled into libdeepspeech.so |

commented Feb 25, 2018

@NicoHood As I said earlier, the Bazel versions requirements are coming from TensorFlow, not from us. |

commented Feb 25, 2018

@NicoHood The flags are properly documented: https://github.com/mozilla/DeepSpeech/tree/master/native_client#building: |

commented Feb 26, 2018

I also tried the latest version of deepspeech master, which also fails, but this time with a runtime error: I am wondering why the alphabet now causes problems. Maybe you changed the format? It seems it's better to wait for the next release. Feel free to contact me before you tag a new release; I am happy to test it for Arch Linux :)

commented Feb 26, 2018

You are passing arguments in the wrong order, wav should be the last one. You also have not setup git-lfs as documented so the language model cannot be read correctly (last error). |

commented Feb 26, 2018

Oh, what a dumb mistake X_x. It now works: Do I really need git lfs? I only compiled deepspeech as described in the README; I did not train a model. I just used the model from 0.1.1. Do you know how to get rid of the warning "Your CPU supports instructions that this TensorFlow binary was not compiled to use: SSE4.1 SSE4.2 AVX AVX2 FMA"? The processing is quite slow on my i7; I guess that is due to this missing optimization?

commented Sep 21, 2018

@NicoHood In such kind of setup, you would have the model already loaded except if you want to call the binary each time, depending on the infrastructure you are working on. One would need to precisely profile to understand .. |

commented Sep 21, 2018

With the native client from you, and libsox3 as you explained: Seems to be no difference |

commented Sep 21, 2018

@NicoHood Can you check on your side what results you get when you remove language model and trie file? |

commented Sep 21, 2018

@NicoHood In particular, please note the times between the versions and the time displayed at Running, there might be something bogus we do with the LM. |

commented Sep 21, 2018 • edited

It is faster, but the output/result is not as precise: |

commented Sep 21, 2018

@NicoHood Thanks! That's progressing! Do you mind doing the same with the stderr redirection and piping everything into ts, just to get a better granularity? And give a try to the pbmm, even though I don't think it's going to have a huge impact now. |

commented Sep 21, 2018

what is ts? Do you mean with debug symbols? |

commented Sep 21, 2018

It's a small binary that timestamps anything piped to its stdin. On Debian, it's packaged in moreutils :)
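For readers unfamiliar with it, a short sketch of how `ts` is typically used, assuming the moreutils version is installed. The `timestamped` helper name is hypothetical, and the `sh -c` command stands in for the real binary invocation, which is not shown in this thread.

```shell
# Run a command and prefix each line of stdout and stderr with the time.
# "%.S" is a ts extension that prints seconds with sub-second precision.
timestamped() {
    "$@" 2>&1 | ts '%H:%M:%.S'
}

if command -v ts >/dev/null 2>&1; then
    timestamped sh -c 'echo loading; sleep 1; echo running'
else
    echo "ts not found; on Debian it is part of the moreutils package" >&2
fi
```

Passing `-s` to `ts` prints the time elapsed since it started instead of the wall-clock time, which can make two runs easier to compare.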

commented Sep 21, 2018 • edited

Edit: This does not represent the actual timings. ts does not seem to work correctly.

commented Sep 21, 2018

@NicoHood Thanks, and with the --lm and --trie ? And .pbmm file? |

commented Sep 21, 2018

@NicoHood Thanks, so that's what I'm seeing, and it's not good. So far, I doubt it has anything to do with threading and TensorFlow, but we should look into that :) |

Closed

commented Sep 28, 2018

@NicoHood So, the increased loading time comes from how we read the trie file, given its size increase with the newer model.

commented Sep 28, 2018

@lissyx I have no knowledge about that. Is there something that I can use to try out any fix? |

commented Sep 28, 2018

@AtosNicoS Nope, it's just to keep you up to date: we are looking into that.

commented Oct 1, 2018

@AtosNicoS Looks like just changing the way we read and write the TRIE file helps well enough: #1610 (comment) and #1610 (comment). The PR also features a simple trie_load binary that just performs the trie loading; running that on the old file format takes about 4.5 sec, and with the new one, it's around 1.5 sec.

commented Oct 22, 2018

Are there any plans for the 2.1 release? Also, where can I find the language models for other languages, such as German? I've seen data is being collected on the website.

commented Oct 22, 2018

@NicoHood What kind of plan do you look for ? For the other languages, there's not enough data being collected yet from Common Voice. |

commented Oct 22, 2018

As you can see here, 0.3.0 is close https://github.com/mozilla/DeepSpeech/projects |

Closed

commented May 19, 2019

Per the other ticket linked just above, libdeepspeech and deepspeech-models are now in the AUR. The packages created from this discussion are useful but are out-of-date and should be bumped by their maintainer(s). @NicoHood I think you own at least one of those?? |

commented May 20, 2019

Yes I own the package, but I did not check it for a long time. I am happy to disown the package or add another co-maintainer. |

commented Jul 19, 2019

Could you update to 0.5.1 ? The 0.5.0 is bogus |

commented Jul 19, 2019

@lissyx Done. |

commented Jul 20, 2019

@9define Could you please claim https://aur.archlinux.org/packages/deepspeech/ ? It hasn't built for a long time.

commented Jul 21, 2019

@chazanov While it would be nice to get that working, I am unable to claim that package at this time due to a lack of time. @NicoHood ping re that upkeep, it's been awhile.. |

commented Jul 30, 2019

@9define what is your username on AUR? gobennyb? |

commented Jul 31, 2019

@NicoHood yes, but please do not add me to packages without first consulting me. As I mentioned, I am unable (and unwilling) to [co-]maintain that package at this time, so please work on that yourself/abandon it/look for other help. |

commented Jul 31, 2019

Oh, I overlooked that part, sorry. I disowned the package. |

Open-in Native Client

Important Note:

Native client patches provided in this GitHub repo work ONLY with the add-ons listed on the https://mybrowseraddon.com/ website. If you were directed to this repo from any other source (website, add-on, plug-in, app, etc.), please proceed with caution when using those products, as they will NOT work with the patches provided in this repo.

Currently, ONLY the following open-in products work with the native client patches in this repo:

- PDF Tools: http://mybrowseraddon.com/pdf-tools.html

- Media Tools: http://mybrowseraddon.com/media-tools.html

- Open in IE™: http://mybrowseraddon.com/open-in-ie.html

- Open in VLC™: http://mybrowseraddon.com/open-in-vlc.html

- Open in PDF Viewer: http://mybrowseraddon.com/open-in-pdf.html

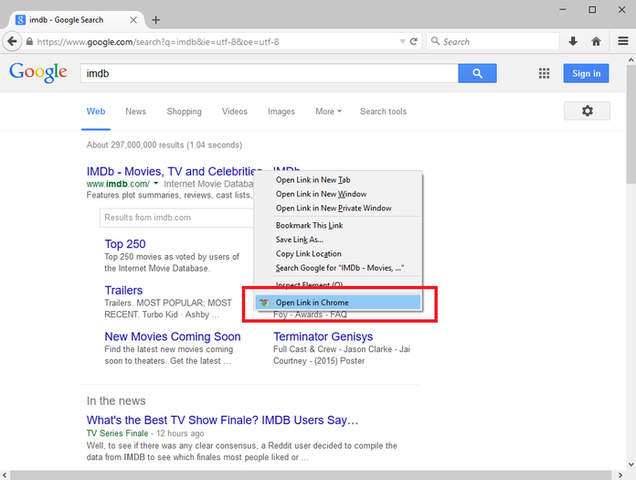

- Open in Chrome™: http://mybrowseraddon.com/open-in-chrome.html

- Open with Internet Download Manager: http://mybrowseraddon.com/open-with-idm.html

- Multi-threaded Download Manager: http://mybrowseraddon.com/multithreaded-download-manager.html

How to work with native-client:

Native client patch is used for connecting your browser (Firefox, Chrome and Opera) with native applications on your machine (Windows, Linux and Mac). If you have an add-on in your browser that needs to communicate with an external application on your computer, this native client patch can be used to easily make this connection.

In order to install 'native-client' on your system please follow the below steps.

- Please head to the releases page at 'https://github.com/alexmarcoo/open-in-native-client/releases', then download and extract the relevant ZIP file to your machine. On Windows, download 'win.zip'; on Mac OS, use 'mac.zip'; and on Linux, use 'linux.zip'.

- Open the extracted folder and click on 'install.bat'. You can open 'install.bat' with any text editor to inspect its contents if you are interested.

- Wait for the screen to display the success message.

- Now the add-on in your browser is fully connected to native applications (e.g. a media player) on your machine.
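The archive choice in the first step can be sketched as a small shell check. This is an illustration only: the `ARCHIVE` variable is hypothetical, and the script prints which file to fetch rather than guessing a download URL for a particular release.

```shell
# Map the kernel name reported by uname to the archive names listed above.
case "$(uname -s)" in
    Linux*)                ARCHIVE="linux.zip" ;;
    Darwin*)               ARCHIVE="mac.zip" ;;
    MINGW*|MSYS*|CYGWIN*)  ARCHIVE="win.zip" ;;   # Unix-like shells on Windows
    *)                     ARCHIVE="" ;;
esac

if [ -n "$ARCHIVE" ]; then
    echo "Download $ARCHIVE from https://github.com/alexmarcoo/open-in-native-client/releases"
else
    echo "Unrecognized platform: $(uname -s)" >&2
fi
```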

In order to uninstall 'native-client' from your system, please follow the below steps.

- Open the extracted folder and click on 'uninstall.bat'.

- Wait for the script to display the success message.

Note: the native-client in this repository is forked from: https://github.com/openstyles/native-client